If an exception occurs, `download_url` reports it with `print('Exception in download_url():', e)`.

Download multiple files with a Python loop

To download the list of URLs to the associated files, loop through the iterable (`inputs`) that we created, passing each element to `download_url`.
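The loop described above could be sketched roughly as follows. The names `download_serial` and `download` are hypothetical, introduced only for illustration; the sketch assumes the worker returns a `(url, seconds)` tuple on success and `None` on failure.

```python
import time


def download_serial(inputs, download):
    """Call `download` on each (url, filename) pair in turn and time the run.

    `download` is assumed to return (url, seconds) on success and None on
    failure; both names are placeholders for illustration.
    """
    t0 = time.time()
    results = []
    for pair in inputs:
        result = download(pair)
        if result is not None:  # skip downloads that reported an exception
            print("url:", result[0], "time:", result[1])
            results.append(result)
    total = time.time() - t0
    print("Total time:", total)
    return results, total
```

Calling `download_serial(inputs, download_url)` would then process the files one after another, which gives a baseline to compare against a parallel run.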

#Python download file from url and save to directory serial

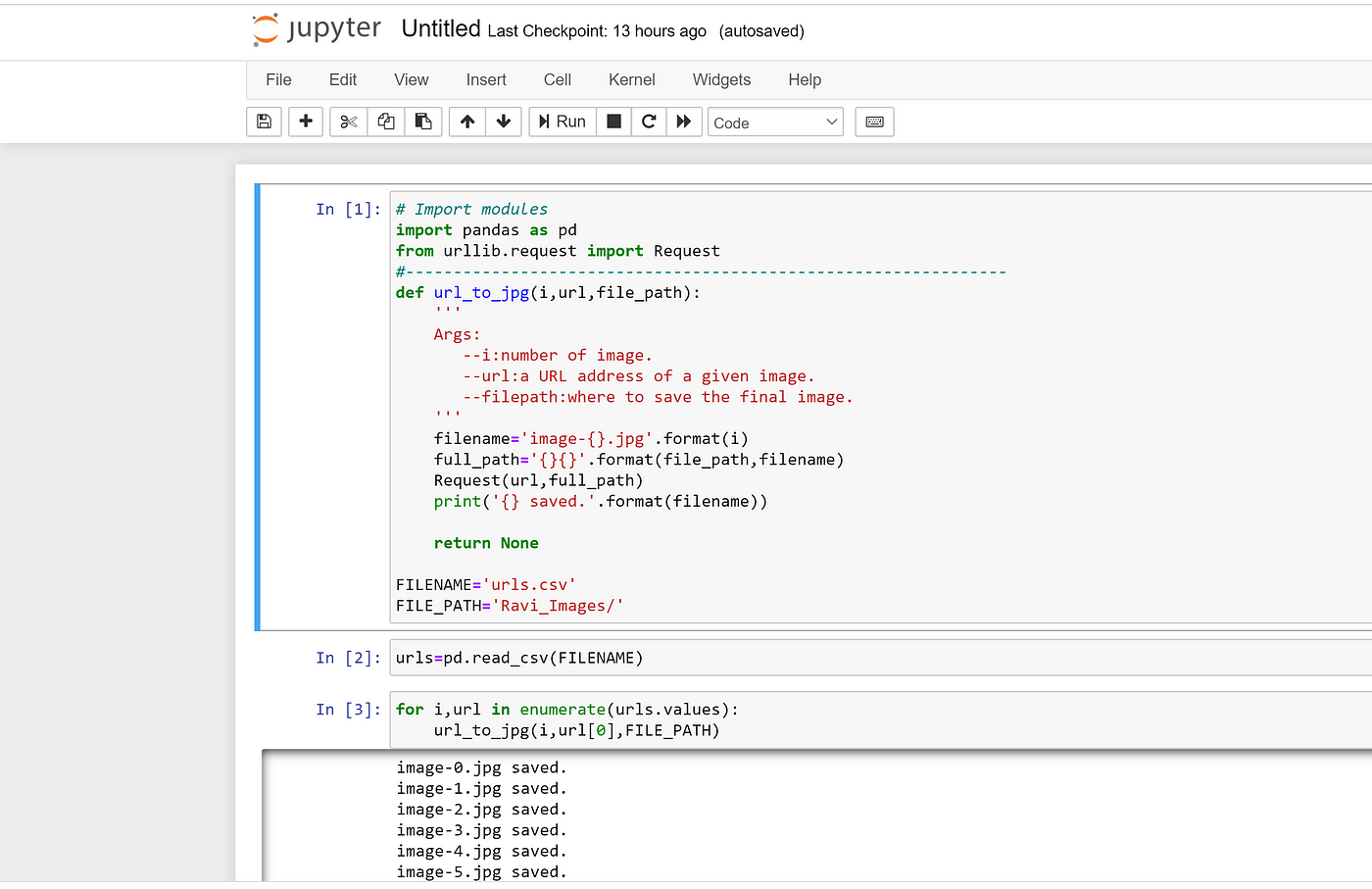

Each tuple contains two elements: a URL and the download filename for that URL. This way we can pass a single argument (the tuple) that carries both pieces of information: `inputs = zip(urls, fns)`.

Function to download a URL

Now that we have specified the URLs to download and their associated filenames, we need a function to download the URLs (`download_url`). We’ll pass one argument (`arg`) to `download_url`. This argument is an iterable (list or tuple) whose first element is the URL to download (`url`) and whose second element is the filename (`fn`). The elements are assigned to variables (`url` and `fn`) for readability. Next, a `try` statement retrieves the URL and writes its contents to the file once the file has been created. When the file is written, the URL and download time are returned. If an exception occurs, a message is printed.

The `download_url` function is the meat of our code. It does the actual work of downloading and file creation. We can now use this function to download files in serial (using a loop) and in parallel.
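A minimal sketch of such a function, following the description above. One assumption to flag: this version swaps in `urllib.request.urlopen` from the standard library so that it runs without any installation; with `requests` installed, `requests.get(url).content` would supply the same bytes.

```python
import time
import urllib.request


def download_url(arg):
    """Download one (url, filename) pair.

    Returns (url, seconds) on success and None if an exception occurred.
    """
    t0 = time.time()
    url, fn = arg[0], arg[1]  # unpack for readability
    try:
        # Stand-in for requests.get(url).content (an assumption of this sketch)
        with urllib.request.urlopen(url) as response:
            data = response.read()
        # Create the file and write the downloaded bytes to it
        with open(fn, "wb") as f:
            f.write(data)
        return url, time.time() - t0
    except Exception as e:
        print("Exception in download_url():", e)
        return None
```

Returning `None` on failure is a choice made here so that callers can tell failed downloads apart from successful ones.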

#Python download file from url and save to directory zip

Multiprocessing requires parallel functions to have only one argument (there are some workarounds, but we won’t get into that here). To download a file we’ll need to pass two arguments, a URL and a filename. So we’ll zip the `urls` and `fns` lists together to get an iterable of tuples.
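As a small illustration of the zipping step, the sketch below pairs two short lists. The URLs and filenames are placeholders, not the files used in this tutorial. It also shows a detail worth knowing: in Python 3, `zip` returns a one-shot iterator, so it must be recreated (or stored as a list) before it can be looped over a second time.

```python
# Placeholder values for illustration only
urls = ["https://example.com/a.nc", "https://example.com/b.nc"]
fns = ["a.nc", "b.nc"]

# Storing the pairs in a list lets both the serial and parallel runs reuse them
inputs = list(zip(urls, fns))

# A bare zip object is consumed by its first full pass
lazy_inputs = zip(urls, fns)
first_pass = list(lazy_inputs)
second_pass = list(lazy_inputs)  # empty, because the iterator is exhausted
```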

#Python download file from url and save to directory code

Depending on your application, you may want to write code that parses the input URL and downloads the file to a specific directory.
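One way this could look, as a rough sketch: take the last path segment of the URL as the filename and join it to a target directory. The helper name `filename_from_url` and the example values in the usage note are hypothetical.

```python
import os
import posixpath
from urllib.parse import unquote, urlparse


def filename_from_url(url, directory):
    """Build a local path in `directory` from the last path segment of `url`."""
    path = urlparse(url).path  # drops the scheme, host, and query string
    name = posixpath.basename(unquote(path))  # URL paths always use '/'
    if not name:
        raise ValueError(f"no filename found in URL: {url!r}")
    return os.path.join(directory, name)
```

For example, `filename_from_url("https://example.com/data/pr_2020.nc", "downloads")` would produce a path ending in `pr_2020.nc` inside `downloads`.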

Here, I’m downloading the files to the Windows ‘Downloads’ directory. I’ve hardcoded the filenames in a list for simplicity and transparency.

Apart from `requests`, the code in this tutorial uses only modules available from the Python standard library.

Import modules

For this example, we only need the `requests` and `multiprocessing` Python modules to download files in parallel. The `multiprocessing` module is part of the Python standard library, but `requests` is a third-party package that must be installed first (for example, with `pip install requests`). We’ll also import the `time` module to keep track of how long it takes to download individual files and to compare performance between the serial and parallel download routines. The `time` module is also part of the Python standard library. The imports are `import requests`, `import time`, and `from multiprocessing.pool import ThreadPool`.

Define URLs and filenames

I’ll demonstrate parallel file downloads in Python using gridMET NetCDF files that contain daily precipitation data for the United States. Here, I specify the URLs to four files in a list (`urls`). In other applications, you may programmatically generate a list of files to download.

Each URL must be associated with its download location.

#Python download file from url and save to directory how to

Automating file downloads can save a lot of time. There are several ways to automate file downloads in Python. The easiest is a simple Python loop that iterates through a list of URLs to download. This serial approach can work well with a few small files, but if you are downloading many files or large files, you’ll want to use a parallel approach to maximize your computational resources. With a parallel file download routine, you can download multiple files simultaneously and save a considerable amount of time. This tutorial demonstrates how to develop a generic file download function in Python and apply it to download multiple files with serial and parallel approaches.
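The parallel approach might be sketched as follows, assuming a thread pool from `multiprocessing.pool`. The names `download_parallel`, `download`, and `workers` are hypothetical, and the choice of `imap_unordered` with four workers is an assumption of this sketch rather than a requirement.

```python
import time
from multiprocessing.pool import ThreadPool


def download_parallel(inputs, download, workers=4):
    """Run `download` on each (url, filename) pair using a pool of threads.

    `download` is assumed to take one argument and to return (url, seconds)
    on success or None on failure.
    """
    t0 = time.time()
    results = []
    with ThreadPool(workers) as pool:
        # Results arrive in completion order, not input order
        for result in pool.imap_unordered(download, inputs):
            if result is not None:
                print("url:", result[0], "time:", result[1])
                results.append(result)
    total = time.time() - t0
    print("Total time:", total)
    return results, total
```

Threads suit this task because each download spends most of its time waiting on the network, so several can be in flight at once.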